Computational structured illumination for gigapixel imaging

High-content microscopy targeting high-resolution imaging across large fields-of-view (FOVs) is an essential tool for large-scale biological studies, especially those that require intracellular resolution across a visualized FOV of whole cell populations. For such studies, it is important that imaging tools allow high-content imaging at fast data-rates to enable efficient large-scale measurements of the sample.

Current industry-standard solutions typically accomplish this by physically translating a sample while visualizing with a high-resolution but small FOV imaging lens, and then digitally stitching these views together into a final high-content image. Unfortunately, this strategy requires 1) long acquisition times (due to long translation requirements), 2) separate auto-focusing requirements (due to sample axial drift over large scan ranges), and 3) high cost (a "quick" and "stable" automated translation stage can run > $10k)!

Question for this project: Can we accomplish this task in a way that is considerably cheaper and easier?

To be fair, we are not the first to ask this question. Many groups have explored exactly this space and have come up with ingenious strategies that have found remarkable success. Three strategies that I am personally super impressed by are described below:

- Lens-less, on-chip microscopy: The sample is positioned fairly close to the image sensor, with no lens system in between. Depending on exactly how close this positioning is, the object can be reconstructed almost directly from the sample's shadows falling on the sensor (assuming < 1 um separation between sample and sensor) or by computationally analyzing the interference between the sample's scattered and transmitted waves. By utilizing principles from fields like pixel super-resolution and holography, these imaging techniques have reported NA/FOV combinations of ~0.8 over 20 mm^2 and 0.1 over 18 cm^2, both of which are in the gigapixel range [1].

- Fourier ptychographic microscopy (FPM): Originally introduced in 2013 by Zheng et al. [2], FPM made waves in the computational imaging community by enabling robust speckle-free amplitude and quantitative-phase biological imaging without holography or any mechanically moving components. By using concepts from phase-retrieval, synthetic-aperture microscopy, light-field imaging, and adaptive optics, FPM iterates through a sequence of low-resolution, large-FOV images of the sample acquired under illumination from different angles, to reconstruct a final high-resolution, large-FOV image. What is particularly exciting is that FPM's computational framework allows space-varying aberration recovery, which is a huge deal for imaging across large FOVs with space-variant aberrations. The original FPM implementation achieved 0.78 um resolution across a 120 mm^2 FOV, though these stats have been steadily improving [3,4].

- Lenslet-based fluorescence microscopy: This imaging concept has been around for a while, but the work that got me really excited is the one presented by Orth et al. [5]. This work decoupled the conventional resolution/FOV tradeoff of a standard imaging lens by using an array of smaller lenslets. At any point in time, each individual lenslet focuses light onto a single point on the sample and subsequently collects the emitted fluorescence, which is eventually focused into a dispersed point on the camera. The two advantages of this system are 1) a highly parallelized imaging scheme where the fluorescence is simultaneously collected from thousands of sample points spanning centimeter-scale areas and 2) a wedge prism that disperses the spectra from each sample point across multiple camera pixels, allowing simultaneous spectral measurements for each collected point. This work reported 1.30 Gpixels of spatial imaging content, though this number grows dramatically to a whopping 16.8 Gpixels of imaging content once spectral data is taken into account.
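To get a feel for why the NA/FOV numbers quoted for lens-less imaging land in the "gigapixel range", here is a rough back-of-the-envelope sketch. It assumes that intensity detail extends out to a cutoff of 2·NA/wavelength, so the Nyquist pixel pitch is wavelength/(4·NA), and it assumes a 0.5 um wavelength. Both assumptions and the function name `nyquist_pixel_count` are mine, not values or names taken from the cited papers.

```python
MM2_TO_UM2 = 1e6   # 1 mm^2 in um^2
CM2_TO_UM2 = 1e8   # 1 cm^2 in um^2


def nyquist_pixel_count(na, fov_um2, wavelength_um=0.5):
    """Rough number of Nyquist-sampled pixels needed to cover a FOV at a given NA.

    Assumes intensity detail extends to a cutoff of 2*NA/wavelength, so the
    Nyquist pixel pitch is wavelength / (4*NA). The default 0.5 um wavelength
    is an assumption for illustration only.
    """
    pitch_um = wavelength_um / (4.0 * na)
    return fov_um2 / pitch_um ** 2


if __name__ == "__main__":
    cases = [
        ("NA 0.8 over 20 mm^2", 0.8, 20 * MM2_TO_UM2),
        ("NA 0.1 over 18 cm^2", 0.1, 18 * CM2_TO_UM2),
    ]
    for label, na, fov_um2 in cases:
        gpix = nyquist_pixel_count(na, fov_um2) / 1e9
        print(f"{label}: ~{gpix:.2f} Gpixels")
```

Under these assumptions both cases come out at roughly 0.8 and 1.2 Gpixels respectively, which is consistent with the gigapixel claim. A different wavelength or sampling convention shifts the numbers by a constant factor, so treat this as an order-of-magnitude check only.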

Unfortunately...

These technologies have not demonstrated cross-compatibility between diffractive and fluorescent imaging contrast, and are thus not suitable for high-content studies requiring complementary analysis from different modalities. Is there a robust strategy that applies for a general class of samples, without requiring specific properties of fluorescence or diffraction?

Our solution...

Let's use structured illumination microscopy! From my work during my PhD stint, I have convinced myself that SIM is unique among the super-resolution techniques in that it works well with both fluorescence AND diffraction (absorption, quantitative-phase, refractive index, etc). So why not use it here?

Methods...

Not to get too bogged down in details, but the resolution improvement in SIM depends on both the illumination and the detection numerical aperture (NA). Typically, SIM uses the same aperture for both illumination AND detection, so its resolution enhancement is limited to a factor-of-2 gain. However, if we use a lower-aperture detection lens (which often allows a larger FOV), we can in effect attain much greater resolution gains over the whole FOV of the lens, assuming that we can maintain the illumination aperture.
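The aperture argument above can be put into numbers with a tiny sketch. It uses the standard linear-SIM bandwidth picture, in which the highest recoverable spatial frequency scales with the sum of the illumination and detection NAs, and it assumes the same wavelength on both sides. The function names and the 0.30 illumination NA in the usage example are hypothetical and not measured values from our setup.

```python
def sim_resolution_gain(na_illum, na_detect):
    """Resolution gain of linear SIM over widefield detection alone.

    Assumes the recoverable bandwidth scales as (na_illum + na_detect),
    compared with na_detect for the detection lens by itself.
    """
    return (na_illum + na_detect) / na_detect


def sim_min_period_um(na_illum, na_detect, wavelength_um):
    """Smallest resolvable period, assuming one wavelength for both paths."""
    return wavelength_um / (2.0 * (na_illum + na_detect))


if __name__ == "__main__":
    # Conventional SIM: same objective for illumination and detection.
    print("matched apertures:", sim_resolution_gain(0.10, 0.10))
    # Low-NA detection (e.g. a 0.10 NA objective) with a hypothetical
    # higher-NA illumination of 0.30.
    print("0.30 illum / 0.10 detect:", sim_resolution_gain(0.30, 0.10))
```

With matched apertures the gain is the familiar factor of 2. Keeping the detection NA at 0.10 and raising the (assumed) illumination NA to 0.30 gives a gain of about 4, which is the whole point of decoupling the two apertures.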

Results

We used scotch tape as our patterned element, and simply illuminated the tape with a laser pointer after positioning it directly adjacent to the sample. Thus, the speckle features generated at the sample via multiple scattering through the scotch tape could conceivably reach wavelength-scale sizes, without being blurred out by a lens system. Though these features are not directly observable or known on the detection side of the imaging system, we can use computational tricks to optimize for them (yes, I know this is a hand-wavy explanation :P - the rigorous explanation is described in a recently submitted paper).

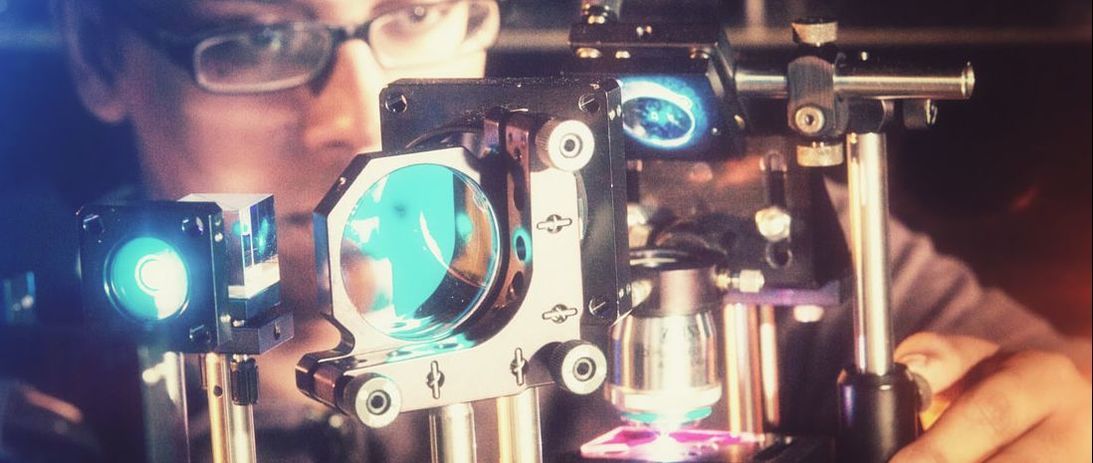

The super cool thing about this concept is that you can use optimization algorithms, instead of intense hardware and analytic solvers, to iteratively converge towards the super-resolution solution. To give an idea of the immense simplicity this allows in the context of SIM, let me point out that conventional SIM microscopes require spatial-light modulators, microscope objectives, polarization controllers, and a fairly complex set of optical components. In contrast, our computational version of SIM requires only a laser pointer, scotch-tape, and a simple 4-f lens system (which can be built within a few hours by any novice). The illumination measurement, translation calibration, aberration correction, and final super-resolution reconstruction are all done computationally, without requiring dedicated optics hardware. I have compared below a standard SIM system, introduced by Kner et al. [6], to the one we use. Clearly, our system is laughably simple compared to the standard SIM system. However, we view this very simplicity as the advantage.
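To make the "optimize instead of engineer" idea a bit more concrete, here is a toy 1D sketch. This is not the algorithm from our paper. It assumes a fluorescence-style model in which each measurement is the low-pass-filtered product of the object and a translated copy of one unknown pattern, with known integer-pixel shifts, and it fits both unknowns by plain gradient descent with a backtracking step size. All names (`lowpass`, `loss_and_grads`, `reconstruct`, `simulate`) and all parameter values are made up for illustration.

```python
import numpy as np


def lowpass(x, otf):
    """Blur a 1D signal with a real, symmetric OTF (circular convolution via FFT)."""
    return np.real(np.fft.ifft(np.fft.fft(x) * otf))


def loss_and_grads(obj, pattern, shifts, otf, meas):
    """Squared-error loss and its gradients w.r.t. the object and the pattern."""
    loss = 0.0
    g_obj = np.zeros_like(obj)
    g_pat = np.zeros_like(pattern)
    for s, y in zip(shifts, meas):
        p_s = np.roll(pattern, s)              # pattern translated by s pixels
        resid = lowpass(obj * p_s, otf) - y
        loss += np.sum(resid ** 2)
        back = 2.0 * lowpass(resid, otf)       # blur is self-adjoint for a real, symmetric OTF
        g_obj += back * p_s
        g_pat += np.roll(back * obj, -s)       # undo the shift before accumulating
    return loss, g_obj, g_pat


def reconstruct(meas, shifts, otf, n_iter=300):
    """Joint gradient descent on object and pattern with backtracking steps."""
    obj = meas.mean(axis=0)                    # start from the average low-res image
    pattern = np.ones_like(obj)                # start from uniform illumination
    step = 1.0
    loss, g_obj, g_pat = loss_and_grads(obj, pattern, shifts, otf, meas)
    history = [loss]
    for _ in range(n_iter):
        while step > 1e-12:
            new_obj = obj - step * g_obj
            new_pat = pattern - step * g_pat
            new_loss, ng_obj, ng_pat = loss_and_grads(new_obj, new_pat, shifts, otf, meas)
            if new_loss < loss:
                break                          # accept this step
            step *= 0.5                        # otherwise shrink and retry
        else:
            break                              # no step lowers the loss: stop
        obj, pattern = new_obj, new_pat
        loss, g_obj, g_pat = new_loss, ng_obj, ng_pat
        history.append(loss)
        step *= 2.0                            # let the step grow again
    return obj, pattern, history


def simulate(n=128, n_shifts=24, cutoff=0.08, seed=0):
    """Synthetic data: point emitters, a random 'speckle' pattern, triangular OTF."""
    rng = np.random.default_rng(seed)
    otf = np.clip(1.0 - np.abs(np.fft.fftfreq(n)) / cutoff, 0.0, None)
    obj = np.zeros(n)
    obj[[40, 44, 80]] = 1.0                    # two emitters closer than the blur width
    pattern = rng.random(n)
    shifts = list(range(n_shifts))
    meas = np.stack([lowpass(obj * np.roll(pattern, s), otf) for s in shifts])
    return obj, pattern, shifts, otf, meas


if __name__ == "__main__":
    obj, pattern, shifts, otf, meas = simulate()
    est_obj, est_pat, history = reconstruct(meas, shifts, otf)
    print("loss: start", history[0], "end", history[-1])
```

Because every accepted step must lower the loss, the data misfit decreases monotonically. Whether the estimate actually resembles the true object depends on the number of shifts, the noise, and the regularization, none of which this toy handles. The joint problem also has inherent ambiguities (for example, a scale factor can be traded between object and pattern), so a real reconstruction needs more care than this.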

Needless to say, preliminary results look promising! I have attached Gigapan views of a monolayer sample of 1 um microspheres. A low-resolution, large-FOV imaging objective (Nikon 4x, 0.10 NA) was used as the main imaging element. The top view shows the microspheres at the native resolution of the imaging objective, while the bottom view shows the resolution improvement enabled by simple scotch tape (and computation) - worlds apart!

[1] Greenbaum, A., Luo, W., Su, T. W., Göröcs, Z., Xue, L., Isikman, S. O., ... & Ozcan, A. (2012). Imaging without lenses: achievements and remaining challenges of wide-field on-chip microscopy. Nature methods, 9(9), 889.

[2] Zheng, G., Horstmeyer, R., & Yang, C. (2013). Wide-field, high-resolution Fourier ptychographic microscopy. Nature photonics, 7(9), 739.

[3] Tian, L., Li, X., Ramchandran, K., & Waller, L. (2014). Multiplexed coded illumination for Fourier Ptychography with an LED array microscope. Biomedical optics express, 5(7), 2376-2389.

[4] Tian, L., Liu, Z., Yeh, L. H., Chen, M., Zhong, J., & Waller, L. (2015). Computational illumination for high-speed in vitro Fourier ptychographic microscopy. Optica, 2(10), 904-911.

[5] Orth, A., Tomaszewski, M. J., Ghosh, R. N., & Schonbrun, E. (2015). Gigapixel multispectral microscopy. Optica, 2, 654-662.

[6] Kner, P., Chhun, B. B., Griffis, E. R., Winoto, L., & Gustafsson, M. G. (2009). Super-resolution video microscopy of live cells by structured illumination. Nature methods, 6(5), 339.